Moderate assets

Last updated: Apr-23-2025

It's sometimes important to moderate assets uploaded to Cloudinary: you might want to keep out inappropriate or offensive content, reject assets that don't meet your website's needs (e.g., profile images without visible faces), or make sure that photos are of high enough quality before making them available on your website. Moderation can be especially valuable for user-generated content. Whether you upload with the server-side upload API call, allow users to upload assets directly from their browser, or update an existing asset, you can mark an asset for moderation by adding the moderation parameter to the call. The parameter can be set to:

- perception_point for automatic moderation with the Perception Point Malware Detection add-on
- webpurify for automatic image moderation with the WebPurify's Image Moderation add-on
- aws_rek for automatic image moderation with the Amazon Rekognition AI Moderation add-on
- aws_rek_video for automatic video moderation with the Amazon Rekognition Video Moderation add-on
- google_video_moderation for automatic video moderation with the Google AI Video Moderation add-on
- duplicate:<threshold> for automatic duplicate image detection with the Cloudinary Duplicate Image Detection add-on
- manual for manually moderating any asset via the Media Library

Moderating assets on upload

To mark an image for Amazon Rekognition moderation:
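A minimal sketch of such an upload call with the Cloudinary Python SDK, assuming the SDK is installed and credentials are configured (for example through the CLOUDINARY_URL environment variable). The helper names and the file name used in the note below are hypothetical.

```python
def moderation_upload_options(kind: str = "aws_rek") -> dict:
    """Build the keyword arguments that mark an uploaded asset for moderation."""
    return {"moderation": kind}


def upload_for_moderation(path: str, kind: str = "aws_rek") -> dict:
    """Upload a local file and mark it for the given moderation."""
    # Imported lazily so the options helper can be used without the SDK installed.
    import cloudinary.uploader

    return cloudinary.uploader.upload(path, **moderation_upload_options(kind))
```

Calling upload_for_moderation("user_photo.jpg") would upload that (hypothetical) local file and request Amazon Rekognition moderation for it.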

Moderation response

The following snippet shows an example of a response to an upload API call, indicating the results of the request for moderation, and shows that the moderation result has put the image in 'rejected' status.
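As a rough sketch of reading such a result in application code, the helpers below assume that the upload response carries a moderation list whose entries have kind and status fields. The sample dictionary is an illustrative fragment written for this example, not real API output, and the helper names are hypothetical.

```python
def moderation_statuses(response: dict) -> dict:
    """Map each moderation kind in an upload response to its status."""
    return {
        entry["kind"]: entry["status"]
        for entry in response.get("moderation", [])
    }


def is_rejected(response: dict) -> bool:
    """Return True if any moderation in the response rejected the asset."""
    return "rejected" in moderation_statuses(response).values()


# Illustrative fragment with an assumed shape, not real API output.
sample_response = {
    "public_id": "sample_id",
    "moderation": [{"kind": "aws_rek", "status": "rejected"}],
}

print(moderation_statuses(sample_response))  # {'aws_rek': 'rejected'}
print(is_rejected(sample_response))          # True
```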

To deliver a placeholder in place of a rejected image, use the default_image parameter (d for URLs) when delivering the image. See the Using default image placeholders documentation for more details.

Moderating existing assets

You can also request moderation for previously uploaded assets using the explicit API method.

The following example requests Perception Point Malware Detection for the already uploaded image with a public ID of shirt:
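A minimal sketch of this request with the Cloudinary Python SDK's explicit method, assuming configured credentials and that the asset was uploaded with the upload delivery type. The helper names are hypothetical.

```python
def explicit_moderation_options(kind: str = "perception_point") -> dict:
    """Build the options for requesting moderation of an existing asset."""
    # "upload" is assumed to be the delivery type of the existing asset.
    return {"type": "upload", "moderation": kind}


def request_moderation(public_id: str, kind: str = "perception_point") -> dict:
    """Ask Cloudinary to moderate an asset that was uploaded earlier."""
    # Imported lazily so the options helper can be used without the SDK installed.
    import cloudinary.uploader

    return cloudinary.uploader.explicit(
        public_id, **explicit_moderation_options(kind)
    )
```

Calling request_moderation("shirt") would request Perception Point Malware Detection for the image described above.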

Moderating assets manually

You can manually accept or reject assets that are uploaded to Cloudinary and marked for manual moderation.

Because some automatic moderations rely on decisions made by an algorithm, you may sometimes want to manually override the moderation decision and either approve a previously rejected image or reject an approved one. You can manually override any automatic decision at any point in the moderation process. Afterwards, the asset is considered manually moderated, regardless of whether it was originally marked for manual moderation.

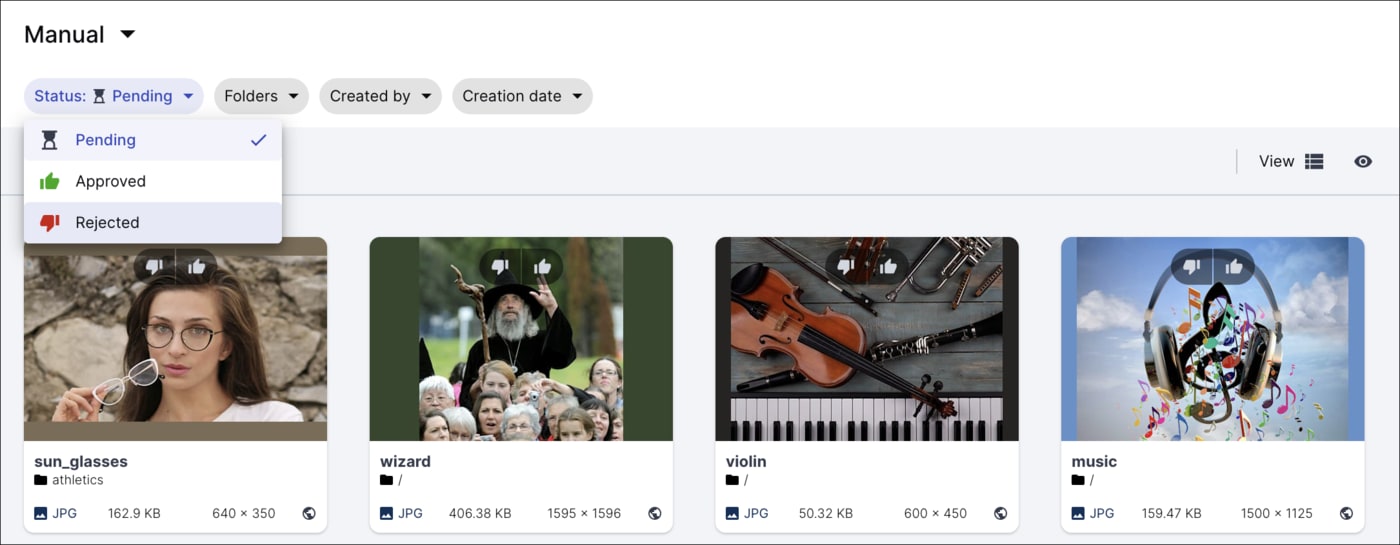

Moderating assets via the Media Library

Users with Master admin, Media Library admin, and Technical admin roles can moderate assets from the Media Library. In addition, Media Library users with the Moderate asset administrator permission can moderate assets in folders for which they have Can Edit or Can Manage permissions.

To manually review assets, select Moderation in the Media Library navigation pane. From there, you can browse moderated assets and decide to accept or reject them.

If an asset is marked for multiple moderations, you can view its moderation history to track its progress through the moderation process.

For more information, see Reviewing assets manually.

Moderating assets via the Admin API

As an alternative to the Media Library interface, you can use Cloudinary's Admin API to manually override the moderation result. The following example uses the update API method, specifying the public ID of a moderated image and setting the moderation_status parameter to rejected.
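A minimal sketch of such an override with the Cloudinary Python SDK's Admin API update method, assuming configured credentials. The helper names and the public ID used in the note below are hypothetical.

```python
VALID_OVERRIDES = ("approved", "rejected")


def override_options(status: str) -> dict:
    """Build the update options for a manual moderation override."""
    if status not in VALID_OVERRIDES:
        raise ValueError(f"status must be one of {VALID_OVERRIDES}, got {status!r}")
    return {"moderation_status": status}


def override_moderation(public_id: str, status: str) -> dict:
    """Manually approve or reject a moderated asset through the Admin API."""
    # Imported lazily so the options helper can be used without the SDK installed.
    import cloudinary.api

    return cloudinary.api.update(public_id, **override_options(status))
```

Calling override_moderation("sample_id", "rejected") would set the moderation status of the (hypothetical) asset sample_id to rejected.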

Moderation notifications

You can add a notification_url parameter while uploading or updating an asset, which instructs Cloudinary to notify your application of changes to moderation status, with the details of the moderation event (approval or rejection). For example:
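A minimal sketch of an upload that requests manual moderation together with a notification, using the Cloudinary Python SDK and assuming configured credentials. The endpoint https://example.com/hooks/moderation and the helper names are hypothetical.

```python
def notified_moderation_options(kind: str, notification_url: str) -> dict:
    """Build upload options that request moderation and a status notification."""
    return {"moderation": kind, "notification_url": notification_url}


def upload_with_notification(path: str, kind: str, notification_url: str) -> dict:
    """Upload a file, mark it for moderation, and ask to be notified of changes."""
    # Imported lazily so the options helper can be used without the SDK installed.
    import cloudinary.uploader

    options = notified_moderation_options(kind, notification_url)
    return cloudinary.uploader.upload(path, **options)
```

For instance, upload_with_notification("user_photo.jpg", "manual", "https://example.com/hooks/moderation") would ask Cloudinary to send moderation status changes to that endpoint.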

Multiple moderations

You can also mark an asset for more than one moderation in a single upload call. This is useful if you want to reject an asset based on more than one criterion, for example, if it's either a duplicate or has inappropriate content. In that case, you might moderate the asset using both the Amazon Rekognition AI Moderation and the Cloudinary Duplicate Image Detection add-ons. You should always add a notification URL when requesting multiple moderations.

An asset has a separate status for each moderation it's marked for:

- queued: The asset is waiting for the moderation to be applied while preceding moderations are applied first.
- pending: The asset is in the process of being moderated but an outcome hasn't been reached yet.
- approved or rejected: The possible outcomes of the moderation.
- aborted: The asset has been rejected by another moderation that was applied to it. As a result, the final status for the asset is rejected, and this moderation won't be applied.

To use more than one moderation, set the moderation parameter to a string consisting of a pipe-separated list of the moderation types you want to apply. The order of your list dictates the order in which the moderations are applied. If included, manual moderation must be last.
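The rule above can be sketched as a small helper that joins the moderation types with pipes and checks that manual, if present, comes last. The helper name is hypothetical, and the threshold 0.8 in the usage lines is only an illustrative value.

```python
from typing import Sequence


def build_moderation_param(kinds: Sequence[str]) -> str:
    """Join moderation types into a pipe-separated moderation string.

    The order of `kinds` is the order in which the moderations are applied.
    """
    if not kinds:
        raise ValueError("at least one moderation type is required")
    # Manual moderation, if requested, must be the last entry in the list.
    if "manual" in kinds[:-1]:
        raise ValueError("manual moderation must be last")
    return "|".join(kinds)


print(build_moderation_param(["aws_rek", "duplicate:0.8"]))  # aws_rek|duplicate:0.8
print(build_moderation_param(["aws_rek", "manual"]))         # aws_rek|manual
```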

For the first moderation in the list, the status of the asset is set to pending immediately on upload, and for all the other moderations requested, the status is set to queued. If the first moderation is approved, the next moderation is started and its status is set to pending.

This process continues until either the asset is rejected by a moderation, in which case any moderations still queued are now aborted and the final moderation status is set to rejected, or until all of the moderations are applied and approved. In that case, the final moderation status of the asset is approved.
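To make the sequence concrete, here is a small local simulation of the status transitions described above. It is an illustrative model only and does not call Cloudinary. It covers automatic moderations applied in order, the outcomes are supplied by the caller, and the function name is hypothetical.

```python
from typing import Dict, List, Sequence, Tuple


def simulate_moderations(
    kinds: Sequence[str], outcomes: Dict[str, str]
) -> Tuple[List[Dict[str, str]], str]:
    """Return (timeline of status snapshots, final moderation status).

    `outcomes` maps each moderation type to "approved" or "rejected".
    """
    if not kinds:
        raise ValueError("at least one moderation type is required")
    # Every moderation starts as queued; the loop promotes them one at a time.
    statuses = {kind: "queued" for kind in kinds}
    timeline = []
    for kind in kinds:
        statuses[kind] = "pending"
        timeline.append(dict(statuses))
        statuses[kind] = outcomes[kind]
        if statuses[kind] == "rejected":
            # A rejection aborts every moderation that is still queued.
            for other in kinds:
                if statuses[other] == "queued":
                    statuses[other] = "aborted"
            timeline.append(dict(statuses))
            return timeline, "rejected"
        timeline.append(dict(statuses))
    return timeline, "approved"


timeline, final = simulate_moderations(
    ["aws_rek", "duplicate:0.8", "webpurify"],
    {"aws_rek": "approved", "duplicate:0.8": "rejected"},
)
print(timeline[0])   # first moderation pending, the others queued
print(timeline[-1])  # last moderation aborted after the rejection
print(final)         # rejected
```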


For example, to mark an image for moderation by the Amazon Rekognition AI Moderation add-on and the Cloudinary Duplicate Image Detection add-on, and to request notifications when the moderations are completed:
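A minimal sketch of that call with the Cloudinary Python SDK, assuming configured credentials. The duplicate threshold 0.8, the notification endpoint, the file name, and the helper names are all hypothetical values chosen for illustration.

```python
from typing import Sequence


def multi_moderation_options(kinds: Sequence[str], notification_url: str) -> dict:
    """Build upload options for several moderations plus notifications."""
    return {
        # Pipe-separated list; the moderations are applied in this order.
        "moderation": "|".join(kinds),
        "notification_url": notification_url,
    }


def upload_with_moderations(
    path: str, kinds: Sequence[str], notification_url: str
) -> dict:
    """Upload a file and mark it for each moderation in `kinds`."""
    # Imported lazily so the options helper can be used without the SDK installed.
    import cloudinary.uploader

    options = multi_moderation_options(kinds, notification_url)
    return cloudinary.uploader.upload(path, **options)
```

For instance, upload_with_moderations("user_photo.jpg", ["aws_rek", "duplicate:0.8"], "https://example.com/hooks/moderation") would request both moderations in a single upload call.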

Multiple notification responses

When you mark an asset for multiple moderations and request notifications, messages will be sent to inform you of the outcome (approved or rejected) of each individual moderation when it resolves. In addition, a summary will be sent at the end of the moderation process informing you of the final outcome and the status of each individual moderation (pending, aborted, approved or rejected).

If the asset was marked for manual moderation: The summary with the asset's final status will only be sent once the user has manually approved or rejected the asset. Even if the asset is rejected by one of the automatic moderations, the asset status will remain pending until the manual moderation is complete.

If the asset wasn't marked for manual moderation: The summary will be sent either when the status of any one of the moderations changes to rejected (making the final status rejected), or as soon as the status of every one of the moderations is approved (making the final status approved).
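The two cases can be sketched as a local helper that, given the current status of each moderation, returns the final status once the summary would be due and None while it is still outstanding. This is an illustrative model, not part of the SDK. It assumes that when manual moderation is included, the final status is the reviewer's decision, and the function name is hypothetical.

```python
from typing import Dict, Optional


def summary_outcome(statuses: Dict[str, str]) -> Optional[str]:
    """Return the final status if the summary is due, otherwise None."""
    manual = statuses.get("manual")
    if manual is not None:
        # With manual moderation, the summary waits for the reviewer,
        # even if an automatic moderation has already rejected the asset.
        # Assumption: the reviewer's decision becomes the final status.
        return manual if manual in ("approved", "rejected") else None
    values = list(statuses.values())
    if "rejected" in values:
        return "rejected"
    if values and all(value == "approved" for value in values):
        return "approved"
    return None


print(summary_outcome({"aws_rek": "approved", "duplicate:0.8": "pending"}))  # None
print(summary_outcome({"aws_rek": "rejected", "duplicate:0.8": "aborted"}))  # rejected
print(summary_outcome({"aws_rek": "rejected", "manual": "pending"}))         # None
```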

Video tutorial: Moderate images using the Node.js SDK

Watch this video tutorial to learn how to moderate images using the Node.js SDK: